Click "Load 3D" to load the model. Drag to rotate, scroll to zoom. Drag the slider to compare textured and geometry views.

1BNRist, Department of Computer Science and Technology, Tsinghua University 2Tencent ARC Lab 3Victoria University of Wellington

*Project lead. †Corresponding author.

Video coming soon.

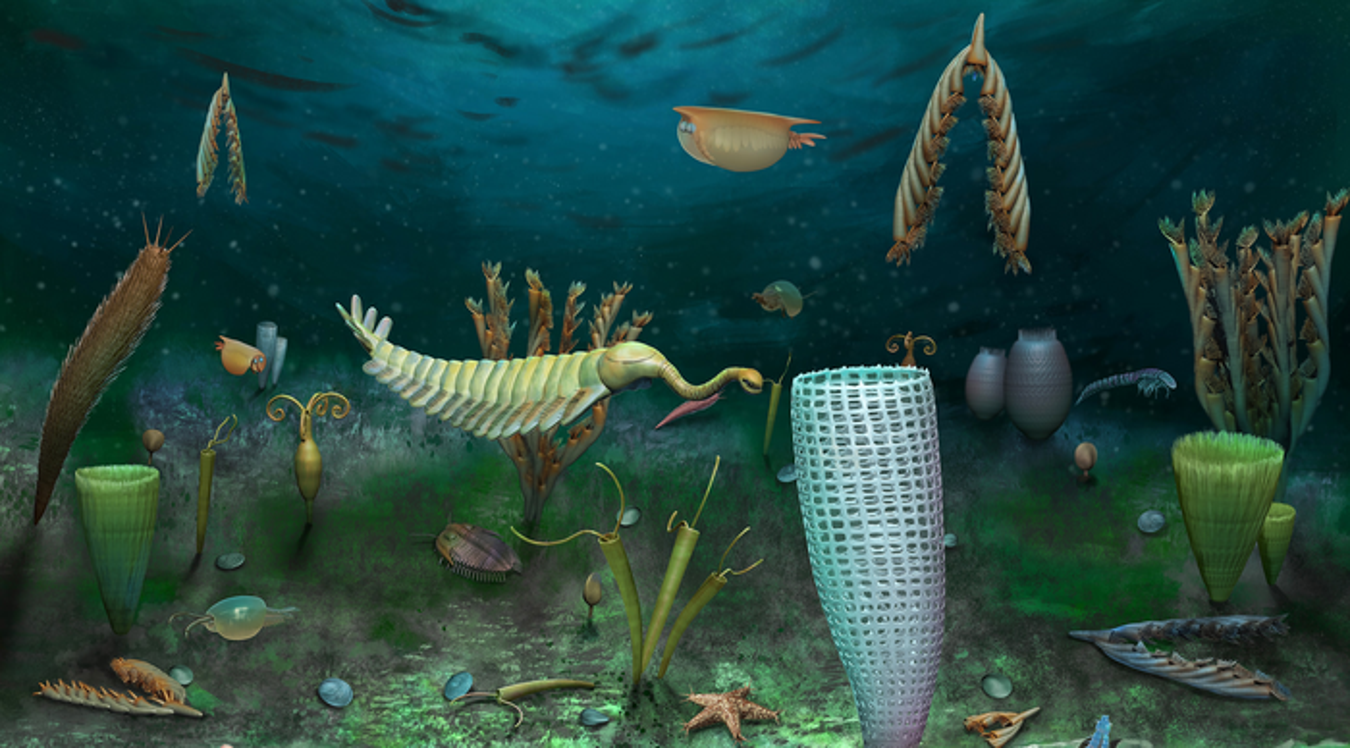

Recent advances in 3D generative models have rapidly improved image-to-3D synthesis quality, enabling higher-resolution geometry and more realistic appearance. Yet fidelity, which measures pixel-level faithfulness of the generated 3D asset to the input image, still remains a central bottleneck. We argue this stems from an implicit 2D-3D correspondence issue: most 3D-native generators synthesize shapes in canonical space and inject image cues via attention, leaving pixel-to-3D associations ambiguous. To tackle this issue, we draw inspiration from 3D reconstruction and propose Pixal3D, a pixel-aligned 3D generation paradigm for high-fidelity 3D asset creation from images. Instead of generating in a canonical pose, Pixal3D directly generates 3D in a pixel-aligned way, consistent with the input view. To enable this, we introduce a pixel back-projection conditioning scheme that explicitly lifts multi-scale image features into a 3D feature volume, establishing direct pixel-to-3D correspondence without ambiguity. We show that Pixal3D is not only scalable and capable of producing high-quality 3D assets, but also substantially improves fidelity, approaching the fidelity level of reconstruction. Furthermore, Pixal3D naturally extends to multi-view generation by aggregating back-projected feature volumes across views. Finally, we show pixel-aligned generation benefits scene synthesis, and present a modular pipeline that produces high-fidelity, object-separated 3D scenes from images.

Click "Load 3D" to load the model. Drag to rotate, scroll to zoom. Drag the slider to compare textured and geometry views.

Click "Load 3D" to load models. Each row shares synchronized rotation and slider. Drag to rotate, scroll to zoom.

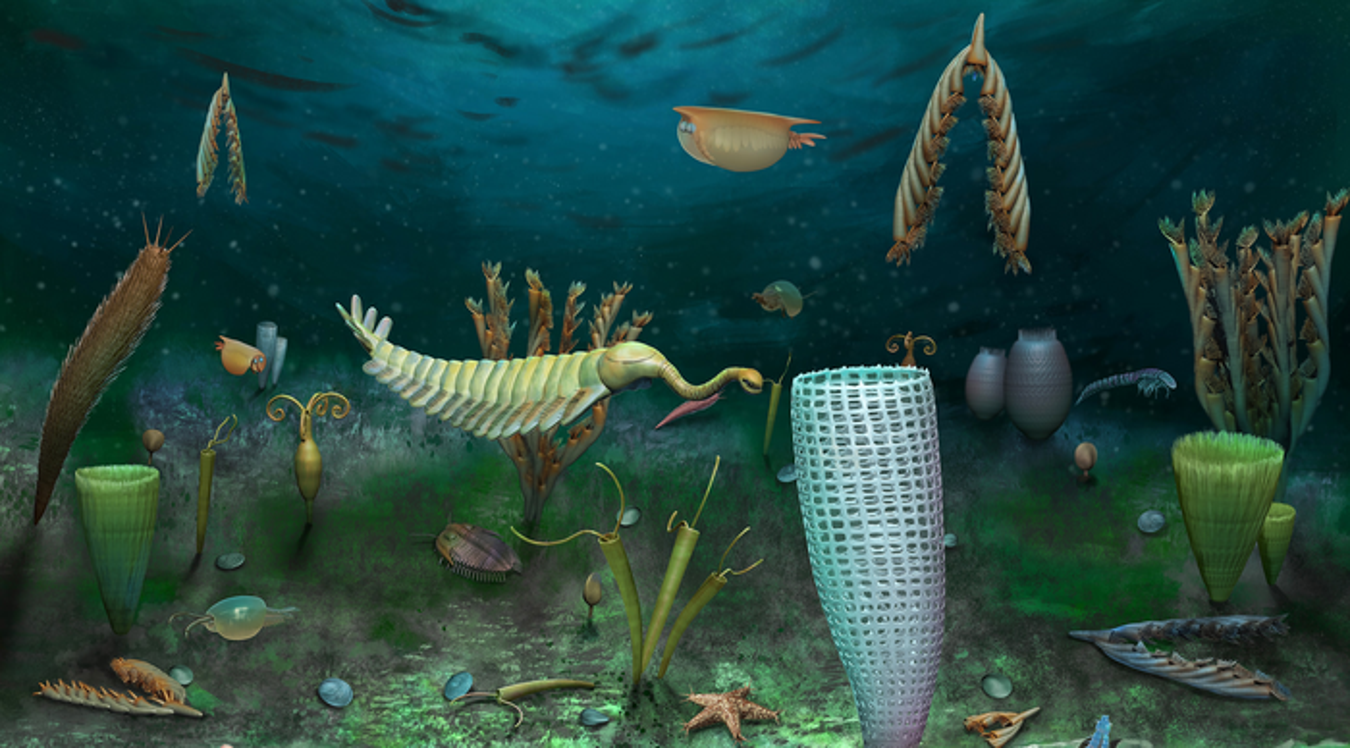

Pixal3D consists of three key components: (1) Pixel-Aligned Structured Latent Representation Learning using a VAE to compress pixel-aligned sparse SDF into efficient sparse latents; (2) an Image Back-Projection-based Conditioner that explicitly lifts 2D image features into 3D feature volumes; and (3) a two-stage generative process conditioned on these volumes to predict coarse structure and detailed latents, decoded into a high-fidelity mesh.

Fig. 2. Overview of the Pixal3D framework.

If you find Pixal3D useful in your research, please consider citing our paper.

@article{li2026pixal3d,

title = {Pixal3D: Pixel-Aligned 3D Generation from Images},

author = {Li, Dong-Yang and Zhao, Wang and Chen, Yuxin and Hu, Wenbo and Guo, Meng-Hao and Zhang, Fang-Lue and Shan, Ying and Hu, Shi-Min},

year = {2026}

}